Eliminate Input Lag on PC-Based Emulators:

Matching the Latency of the Original Device

NOTE: For advanced readers who are software developers.

Don’t know what “racing the beam” is? Read this Wired Magazine article about the Atari 2600 book.

This article is aimed at software developers who write emulators with raster-accurate, line-accurate or cycle-accurate emulation (8-bit PC emulators, arcade machine emulators, 1980s-era console emulators).

While working on a different software development project, I accidentally invented a technique that dramatically reduces input lag for emulators. However, implementing this brand-new algorithm requires the help of emulator authors.

UPDATE: This is the emulator holy grail! Successful GroovyMAME experiment and WinUAE experiments!

Past Contributions by Blur Busters to Emulator Scene

In the past, Blur Busters has made a few contributions to the emulator scene, such as black frame insertion (www.testufo.com/blackframes), which allows 120Hz monitors to gain better 60Hz motion clarity for emulators. In 2013, Blur Busters was the world’s first to popularize software-based black frame insertion (MAME article in 2013), which is now in EmuGen Wiki, RetroArch, WinUAE, and many other emulators.

Beam Racing: Lagless “VSYNC ON” Via Perfect Tearingless VSYNC OFF

Historically, it was not possible to synchronize the rasters of an emulator to the rasters of a real world display. You generated an emulator frame buffer all at once, then delivered it to the display.

However, with the advent of ultra-accurate raster emulation and access to raster APIs on modern PCs, emulators are now running cycle-exact raster emulations in their frame buffers. Computers have finally become simultaneously fast and accurate enough to approximately synchronize virtual rasters with the real-world rasters.

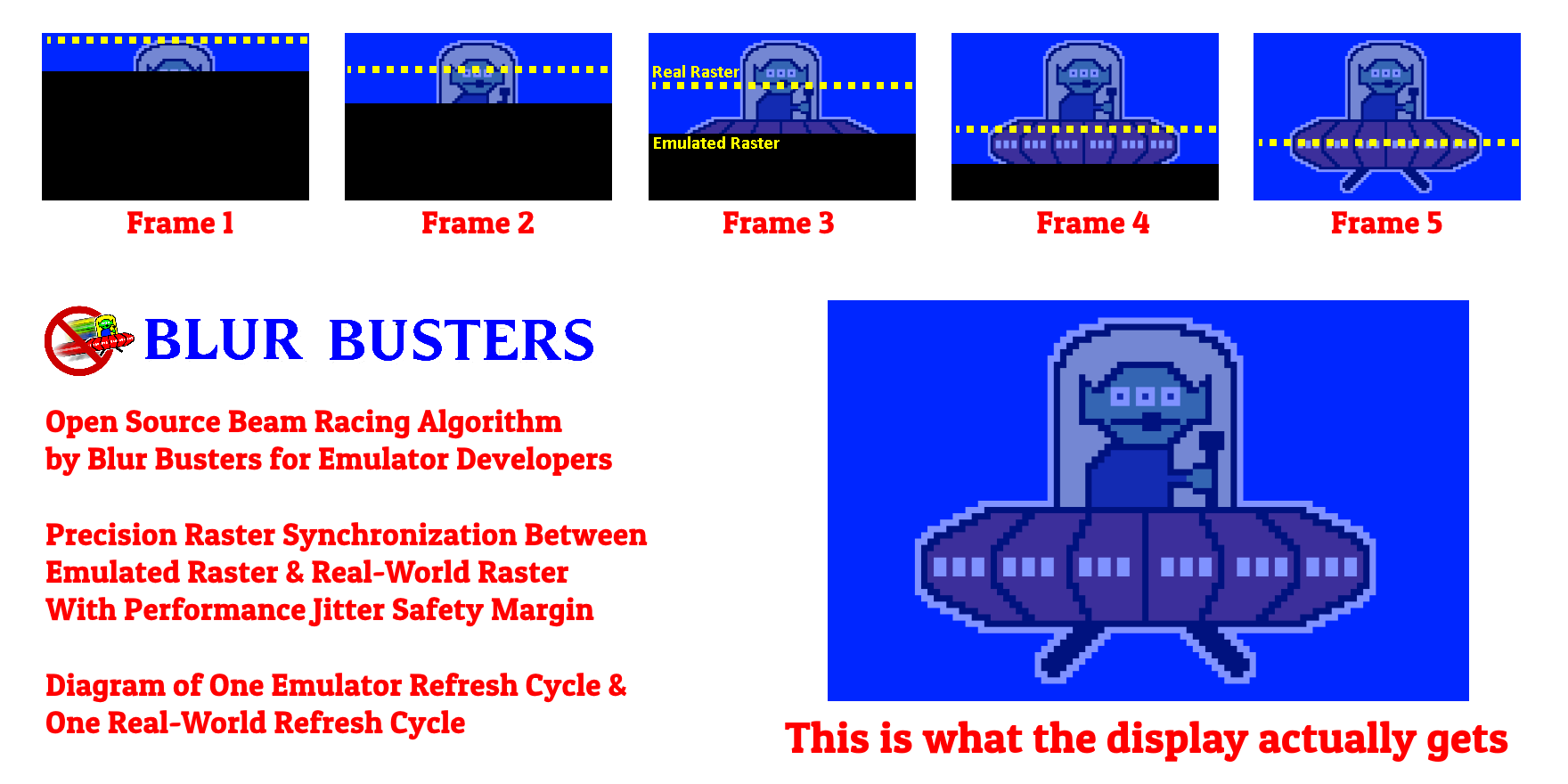

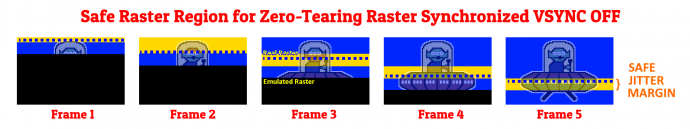

Here is a simplified diagram of “beam racing”:

(1/5th frame, but in reality, frame slices can be as tiny as 1 or 2 scanlines!)

- Only ~10% overhead added to CPU.

- Tearing-less VSYNC OFF: Lagless VSYNC ON

- As long as emulated raster stays ahead of real raster, the black part of frame never appears

- Same number of pixels per second.

- Still emulating 1:1 emulated CPU.

- Still emulating the same number of emulator rasters

- Still emulating the same number of emulator frames per second (60 fps).

- Performance can jitter safely in the frame-slice height area, so perfect sync not essential.

- Lagless VSYNC ON achieved via ultra-high-buffer-swap-rate VSYNC OFF

- It’s only extra buffer swaps mid-raster (simulating a rolling-window buffer)

Little Performance Penalty on Modern Computers and Modern GPUs

Historically, imperfectly-synchronized computers were not fast enough to do this in a practical way. However, they now are. I have discovered this is now finally possible at the sub-millisecond timescale, because:

- Modern computers now have microsecond-precise clocks available.

- Modern GPUs can pageflip simple low-resolution frame buffers at 10,000 frames per second during VSYNC OFF (0.1ms between flips).

- There are PC-based APIs, RasterStatus.ScanLine as well as D3DKMTGetScanLine(), that let a developer poll for the raster scan line that the GPU is currently outputting to the actual display.

- Or you can avoid ScanLine APIs by using precision time-offsets from a VSYNC timestamp.

- It’s possible to steer the exact location of a tearline — or even hide a tearline — using ultra-precise VSYNC OFF pageflips.

If you do a busyloop on RasterStatus.ScanLine and then pageflip (Direct3D Present()) followed by an immediate Flush(), then you can control the exact location of the tearlines. On modern, fast, lightly-loaded systems running at high priority, software-based tearline-steering accuracy can be <0.1ms!
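The busyloop-then-flip idea can be sketched as follows. This is only an illustration under stated assumptions: `get_scanline`, `present` and `flush` are hypothetical callables that the caller would wrap around RasterStatus.ScanLine (or D3DKMTGetScanLine()), Present() and a GPU flush. They are not real library functions, and the `timeout_s` guard is an added safety measure rather than part of the technique.

```python
import time
from typing import Callable


def flip_at_scanline(
    target_line: int,
    get_scanline: Callable[[], int],
    present: Callable[[], None],
    flush: Callable[[], None],
    timeout_s: float = 0.05,
) -> int:
    """Busy-wait until the display raster reaches target_line, then flip.

    All three callables are hypothetical wrappers supplied by the caller.
    Returns the scanline that was observed just before the flip.
    """
    deadline = time.perf_counter() + timeout_s
    line = get_scanline()
    # Spin instead of sleeping: an OS sleep would add far more than 0.1ms of jitter.
    while line < target_line and time.perf_counter() < deadline:
        line = get_scanline()
    present()  # the tearline lands near the raster position at this moment
    flush()    # submit the flip right away instead of letting it be batched
    return line


if __name__ == "__main__":
    # Stand-in raster that advances 7 lines per poll, for demonstration only.
    fake_raster = iter(range(100, 2000, 7))
    seen = flip_at_scanline(
        540,
        get_scanline=lambda: next(fake_raster),
        present=lambda: None,
        flush=lambda: None,
    )
    print("flipped at scanline", seen)
```

The sketch flips immediately if the raster is already past the target line. A real implementation would also need to handle wrap-around into the next refresh cycle.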

Due to this, I have come up with a new algorithm that piggybacks on all of this for essentially lagless emulation with Direct3D to CRTs and 60Hz LCDs.

Initial Proof of Concept Video

UPDATE: As of April 2nd, 2018, two emulators have now implemented this technique! Scroll to bottom.

I’ve created a proof of concept video of precision tearline control (without using RasterStatus.ScanLine — I simply do precision performance counter mathematics as an offset from VSYNC timestamps):

Source code will be released soon on GitHub. Stay tuned.

100% Tearing-Free Raster-Following 5000fps+ VSYNC OFF

The VSYNC ON appearance can be successfully simulated by raster-synchronized VSYNC OFF, if you have access to the position of the real-world raster (or have access to a VSYNC heartbeat and can extrapolate the time between heartbeats, which is useful for platforms that only give you access to VBI timing).

With proper approximate raster synchronization, and ultra high frame rates, you have a form of lagless VSYNC ON. The tearlines completely disappear, if we pageflip at extremely precise intervals trailing behind the emulated raster.

Raster-synchronized 2000fps+ or 5000fps+ VSYNC OFF looks exactly like perfect VSYNC ON, but with no lag, as long as the processing fluctuations are kept in a tight range.

Low-flip-rates are still useful, e.g. 240fps at 60Hz, for 4-frameslice granularity that still reduces previously-lowest VSYNC ON lag by as much as a further 75%.

The concept is a ~5000 frames per second VSYNC OFF, with the buffer swap timings trailing behind the emulated raster (on a physical-screen-location basis — where scan line #240 midpoint of 480p emulator framebuffer would correspond to raster #540 of a 1080p display).

For math simplicity, let’s go with 2000fps VSYNC OFF (0.5ms raster lagbehind), even though I’ve seen fast graphics cards exceed 10,000 frames per second (0.1ms raster lagbehind) on emulator framebuffers.
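The relationship between flip rate, frame-slice count and raster lagbehind is simple arithmetic. Here is a small sketch (the function name is made up for illustration):

```python
def frame_slice_stats(flips_per_second: float, refresh_hz: float = 60.0):
    """Return (frame slices per refresh cycle, time per slice in milliseconds)."""
    slices_per_refresh = flips_per_second / refresh_hz
    slice_ms = 1000.0 / flips_per_second
    return slices_per_refresh, slice_ms


if __name__ == "__main__":
    for rate in (240, 2000, 10000):
        slices, ms = frame_slice_stats(rate)
        print(f"{rate} flips/sec at 60Hz: {slices:.1f} slices, {ms:.2f}ms per slice")
```

With these inputs, 240 flips per second gives 4 frame slices per 60Hz refresh cycle, 2000 gives 0.5ms per slice, and 10,000 gives 0.1ms per slice.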

For 2000fps VSYNC OFF with ~1ms input lag compared to real world machines or FPGA emulators:

- Recycle the same emulator framebuffer, plotting new pixels, one raster at a time.

- The emulator software checks RasterStatus.ScanLine or D3DKMTGetScanLine()

- The emulator runs 1/2000sec (0.5ms) worth of emulation execution.

- The emulator rasterplots 1/2000sec worth of scanlines to the emulator framebuffer.

- The emulator raster runs 2/2000sec (1ms) ahead of the physical scan position reported by RasterStatus.ScanLine or D3DKMTGetScanLine().

- Copy the low-resolution incompletely-rasterplotted framebuffer to another framebuffer.

- Pageflip that copy immediately.

- Repeat all the above, 2000 times a second. (or more)
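The steps above can be sketched for a single refresh cycle as follows. This is a simplified model, not the implementation used by any particular emulator. `emulate_lines`, `present` and `wait_until` are hypothetical callbacks provided by the caller, and the framebuffer is just a list with one entry per emulated scanline. `scanout_start` is assumed to be the time the real raster enters the first visible line, and `scanout_period` the time it takes to scan the visible area.

```python
from typing import Callable, List


def beam_race_one_refresh(
    framebuffer: List[int],
    slices: int,
    scanout_start: float,
    scanout_period: float,
    emulate_lines: Callable[[List[int], int, int], None],
    present: Callable[[List[int]], None],
    wait_until: Callable[[float], None],
) -> None:
    """Emulate one frame as `slices` frame slices, flipping after each slice.

    Slice i is flipped while the real raster is still one slice above it,
    so the tearline falls on rows that are identical in the old and new
    buffers. The finished emulated raster is then up to two slice-times
    ahead of the real raster.
    """
    emu_lines = len(framebuffer)
    slice_time = scanout_period / slices
    for i in range(slices):
        first = i * emu_lines // slices
        last = (i + 1) * emu_lines // slices
        emulate_lines(framebuffer, first, last)  # plot this slice's rasters in place
        # Hold the flip until the real raster is due at the start of slice i-1.
        # For i == 0 this is inside the VBI, just before scanout begins.
        wait_until(scanout_start + (i - 1) * slice_time)
        present(list(framebuffer))  # flip a copy of the partially plotted frame


if __name__ == "__main__":
    clock = {"t": 0.0}            # simulated clock, for demonstration only
    flips = []

    def fake_wait(t: float) -> None:
        clock["t"] = max(clock["t"], t)

    def fake_emulate(fb: List[int], a: int, b: int) -> None:
        for y in range(a, b):
            fb[y] = 1             # mark the scanline as freshly plotted

    beam_race_one_refresh(
        [0] * 240, 4, 1.0, 1 / 60,
        fake_emulate, lambda fb: flips.append((clock["t"], sum(fb))), fake_wait,
    )
    for t, plotted in flips:
        print(f"flip at t={t:.5f}s with {plotted} scanlines plotted")
```

In this model a flip can be up to one slice-time late before the real raster enters rows that differ between buffers, which is the jitter margin.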

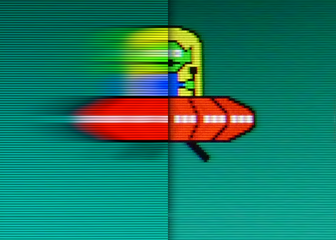

Tearing never appears, as long as the emulator raster is slightly ahead of the physical raster (in terms of screen physical position in inches — not number of scanlines).

The VSYNC OFF frame buffer swap is occurring on essentially duplicate already-scanned portions, so there’s no tearing visible, because the swap is occurring on a real-world scanline behind the virtualized raster.

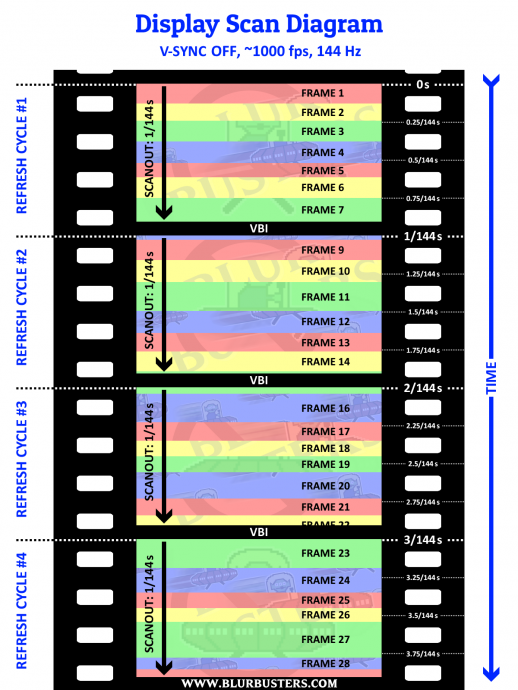

Think of it as a rolling-window line buffer (multi-scanlines) successfully de-facto achieved by ultra-high-frequency precision VSYNC OFF flipping on full frame buffers via ordinary Direct3D APIs (see Scan Diagram Example below).

The bottom line is that tearing never appears as long as the real world raster versus virtual raster sync stays within this time margin (e.g. 0.5ms jitter for the 2000 buffer swaps per second scenario). It just looks exactly like VSYNC ON. A virtually lagless VSYNC ON, for that matter.

Physical location, meaning scanline #120 of 240p (emulated raster) in emulator corresponds to scanline #540 of 1080p (real-world raster). A 1ms scan-ahead for the emulated raster would be approximately 1/16th of screen height for a 60Hz frame.
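The position mapping and the scan-ahead fraction can be worked through in a few lines. This is a sketch with made-up function names. It treats the whole refresh period as visible scanout and ignores the VBI, so the fraction is approximate.

```python
def emulated_to_physical_line(emu_line: int, emu_height: int, display_height: int) -> int:
    """Map an emulated scanline to the display scanline at the same screen position."""
    return emu_line * display_height // emu_height


def scan_ahead_screen_fraction(scan_ahead_s: float, refresh_hz: float = 60.0) -> float:
    """Fraction of screen height the raster covers during scan_ahead_s (VBI ignored)."""
    return scan_ahead_s * refresh_hz


if __name__ == "__main__":
    print(emulated_to_physical_line(120, 240, 1080))  # midpoint of a 240p frame
    print(emulated_to_physical_line(240, 480, 1080))  # midpoint of a 480p frame
    fraction = scan_ahead_screen_fraction(0.001)      # 1ms scan-ahead at 60Hz
    print(f"1ms is about 1/{1 / fraction:.1f} of the screen height")
```

Both midpoint examples map to display scanline 540 on a 1080p display.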

With some clock-accurate emulators, running in high-priority process mode, it should be possible to do scan-ahead margins as tight as 0.1ms (aka practically real-world machine matching latencies). This would depend on how high a frame rate a GPU can do on the emulator frame buffer.

Scan Diagram Example

To more easily conceptualize tearline-steering during VSYNC OFF, here is a typical scan diagram for 1000fps (imperfect frametimes) during VSYNC OFF.

The buffer swaps (page flips), such as Direct3D Present() API calls, create tearlines very near the screen location reported by D3DKMTGetScanLine() or RasterStatus.ScanLine at that moment.

Since the emulator frame rate is low (60 fps), the frame slice immediately above the new frame slice is simply a duplicate of what is already displayed, and buffer swaps in that region produce no tearline: VSYNC OFF swaps between identical frames (in our case, frame slices above the current physical raster) have NO tearline. So as long as the emulator raster stays ahead of (further down the screen than) the real-world raster by a tight margin (e.g. 0.5ms), tearlines are never physically scanned out. The emulator keeps plotting new rasters to the emulated frame buffer and keeps doing VSYNC OFF buffer swaps as it goes.

The distance between tearlines is a function of frametime and of the display signal’s scan rate (i.e. how quickly ScanLine increments, which happens at a constant rate). The variation in distance between tearlines reflects the computer’s performance jitter.

Perfect sync (to the exact scanline) is impossible, but we don’t need perfect sync, since we’re doing a scanbehind approach with a specific safety margin of jitter (e.g. 0.1ms, 0.5ms, 1ms, or a specific configured jitter margin). As long as jitter is less than this margin, tearlines never become visible.
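As a worked example of that relationship, tearline spacing in scanlines is the frametime multiplied by the horizontal scan rate. The sketch below uses a 67.5kHz scan rate, which is the standard 1080p 60Hz timing (1125 total lines per refresh). That figure is an example value, not something measured here, and the function name is made up.

```python
from typing import List, Sequence


def tearline_spacings(flip_times_s: Sequence[float], scan_rate_hz: float) -> List[float]:
    """Scanlines scanned out between each pair of consecutive buffer swaps."""
    return [
        (later - earlier) * scan_rate_hz
        for earlier, later in zip(flip_times_s, flip_times_s[1:])
    ]


if __name__ == "__main__":
    # Roughly 1000fps with some frametime jitter (timestamps in seconds).
    flips = [0.0000, 0.0010, 0.0021, 0.0030]
    for lines in tearline_spacings(flips, 67_500.0):
        print(f"{lines:.1f} scanlines between tearlines")
```

A perfectly steady 1ms frametime would put tearlines 67.5 scanlines apart at this scan rate. Jitter in the flip timestamps shows up directly as uneven spacing.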

Perfect 60fps CRT motion, with the VSYNC ON look and feel, but without the input lag.

If you recycle the same partially rasterplotted emulator framebuffer, and pageflip that repeatedly — then to the current raster, it simply looks like you’re doing many duplicate pageflips — the incomplete rasterplotted data ahead of the realworld raster, never becomes visible, and thus, tearing never appears!

Tests show that 10,000fps VSYNC OFF (0.1ms-to-0.2ms scanbehind input lag) is possible on high-end graphics cards on high-end systems, when using low-resolution emulator framebuffers, without slowing down the emulator too much. Even with the faster HLSL frame buffers (fuzzy scanline emulation), >1000 VSYNC OFF buffer swaps per second is still possible (= approx 1ms scanbehind realworld raster versus emulated raster).

GPU overheads are extremely low, and blitting smaller framebuffers (e.g. 320×240, even 1920×1080) to the GPU takes little bandwidth and time. One can keep the GPU busy scaling the image at 10,000fps, while using all of a 3GHz+ CPU to keep doing cycle-accurate emulation.

Useful Purpose

- Targeted for 60Hz displays

- Algorithm suitable for any raster-exact emulators (Atari, C64, NES, SNES, etc).

- Faithful original input lag of original device for each physical location of the screen’s surface

- Compatible with scaling (e.g. 1080p, 4K)

- Perfect tearing-free VSYNC OFF

- Near synchronous raster scanning (within a safety jitter margin).

- Works with Direct3D

Considerations

- Only worthwhile for 50 Hz or 60 Hz displays, due to near-synchronous scanning.

- Requires approximate cycle-exact sync of emulator to real world (within safety jitter margin)

- Background processes will cause occasional brief appearances of tearing due to the emulator raster falling behind the real-world raster.

- Requires graphics card with high pageflip rate capability (fast graphics RAM, not integrated GPU)

- Not useful for 120Hz or 240Hz displays (which can reduce emulator input lag via faster scanout)

- Cannot be combined with software-based black frame insertion

Solution For Beginning to Beam-Race a New Refresh Cycle

One tricky challenge is that you need to begin plotting the emulator raster before RasterStatus.ScanLine begins incrementing. (Frustratingly, it stays at a fixed value during VBI).

The VBI length can be estimated by timing the length of time of RasterStatus.InVBlank (in microseconds). This is always microsecond exact, and this becomes your starting pistol for beam racing the emulator raster.

However, I have come up with a better method! Use WinAPI QueryDisplayConfig() to get the exact horizontal scan rate AND the vertical total. The VBI size is the vertical total minus the vertical resolution. By knowing the scan rate (number of scan lines per second), you can get the exact VBI time to the sub-microsecond on any graphics card. For Linux systems, there are modelines which can be queried for the vertical total.

By knowing your computer’s exact VBI size to the microsecond, using standard API calls, it is possible to begin beam racing only a few scanlines ahead of a real raster, and go to a huge number of frame slices (even 100+) instead of just a few frame slices.

UPDATE: Hardware raster polls are now optional. I’ve come up with a method that eliminates the need for access to a hardware raster poll. You only need to know timestamps of VSYNC to accurately extrapolate the raster position, since VSYNC OFF tearlines are raster-based positions as a time offset from the last VSYNC timestamp.
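The VBI arithmetic can be sketched as below. The function does not call QueryDisplayConfig() or parse a modeline. It assumes the caller has already obtained the vertical total, the vertical resolution and the horizontal scan rate from one of those sources. The example numbers are the standard 1080p 60Hz timing (1125 total lines, 67.5kHz), used purely as an illustration.

```python
def vbi_duration_s(vertical_total: int, vertical_active: int, scan_rate_hz: float) -> float:
    """Duration of the vertical blanking interval in seconds."""
    vbi_lines = vertical_total - vertical_active  # scanlines that are not displayed
    return vbi_lines / scan_rate_hz               # each line takes 1/scan_rate seconds


if __name__ == "__main__":
    seconds = vbi_duration_s(1125, 1080, 67_500.0)
    print(f"VBI is {1125 - 1080} lines, about {seconds * 1e6:.1f} microseconds")
```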
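A minimal sketch of that extrapolation follows. It rests on one loudly stated assumption: the VSYNC timestamp marks the start of the VBI, so the visible scanlines begin once the VBI has elapsed. Where the timestamp actually falls within the signal depends on the platform and would need to be calibrated. The function name and parameters are made up for illustration.

```python
from typing import Optional


def estimate_scanline(
    now_s: float,
    last_vsync_s: float,
    refresh_period_s: float,
    vertical_total: int,
    vertical_active: int,
) -> Optional[int]:
    """Estimate the visible scanline being scanned out, or None during the VBI.

    Assumes (hypothetically) that last_vsync_s marks the start of the VBI.
    """
    elapsed = (now_s - last_vsync_s) % refresh_period_s
    # The raster advances through all vertical_total lines at a constant rate.
    line_in_signal = elapsed / refresh_period_s * vertical_total
    vbi_lines = vertical_total - vertical_active
    active_line = line_in_signal - vbi_lines
    return int(active_line) if active_line >= 0 else None


if __name__ == "__main__":
    period = 1 / 60
    for offset in (0.0001, period / 2, period * 0.99):
        print(offset, estimate_scanline(offset, 0.0, period, 1125, 1080))
```

The same arithmetic run in reverse gives the time at which to flip so that a tearline lands on a chosen scanline.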

Implementations Now Exist

EDIT (March 17, 2018): Basic beam racing renderers have already arrived!

- Strip-based beam racing with virtual reality rendering

- Beam racing on Android in Oculus VrLib

- Beam racing experiment with MAME

- Beam racing now in WinUAE

I have heard from more emulator developers that they will be implementing the beam racing algorithm; they will be added here as time goes on.

Alternative Low-Lag Emulation: Variable Refresh Rate Displays

Variable refresh rate displays such as FreeSync and GSYNC can massively reduce emulator input lag. The use of 60fps at 144Hz VRR allows the VSYNC ON look and feel, without the input lag. We have written much about VRR displays, including Blur Busters G-SYNC 101.

In fact, 60fps at 240Hz GSYNC can provide a spectacular “Quick Frame Transport” effect (4x faster scanout of a 60Hz refresh cycle). Essentially, a 16.7ms refresh cycle is delivered completely in a mere 4.2 milliseconds after a Direct3D Present(), which makes it possible for a software-based emulator to have less input lag than the original machine running on its original display!

However, that can have different lag gradient effects (slightly lagged for top edge, much less lagged for bottom edge). If you don’t care about perfectly matching an original machine’s near-pixel-exact input lag for all pixels on a display, this can be a better and easier approach since emulator clock-exactness is not so critical.

This “beam racing” algorithm is applicable to both scanline-accurate emulators and cycle-exact emulators.

That said, the more complex raster scanline-follower approach can be mighty useful if building an ultra-low-lag emulation machine with a fixed-Hz display, including arcade CRTs too.

UPDATE: Beam-racing Variable Refresh Rate is Possible!

2018/04/10 UPDATE: We have found a way to beam-race variable refresh cycles (via hybrid GSYNC+VSYNC OFF as well as FreeSync+VSYNC OFF modes). The refresh cycle needs to be initially triggered by the first Present() or glutSwapBuffers() to begin the software-triggered display scanout, then you beam-race it with subsequent Present() / glutSwapBuffers() of additional lagless frameslices — treating the rest of the scanout of the refresh cycle as a normal VSYNC OFF refresh cycle.

Conclusion

I hope that some emulator authors decide to implement this lagless raster-following tearless VSYNC OFF algorithm. (Give us a shout-out about our algorithm if you do!)

If you are an emulator author, please drop by Blur Busters Forums at forums.blurbusters.com or drop an email to Mark at mark[at]blurbusters.com!